The Latest in AI Marketing & Sales Tech

Real updates, not press releases. We track emerging AI trends, new product features, and research that shapes the future of business automation.

2026 Churchill Scholar in Biological Engineering: Katie Spivakovsky’s AI-Bio Path to Cambridge

MIT senior Katie Spivakovsky has been named a 2026–27 Churchill Scholar and will pursue an MPhil in biological sciences at the University of Cambridge, based at the Wellcome Sanger Institute, advancing work at the intersection of AI and bioengineering.

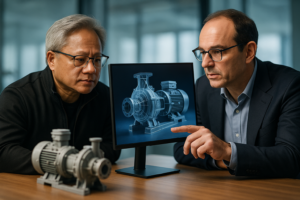

NVIDIA and Dassault Partner on an Industrial Virtual Twin Platform

At 3DEXPERIENCE World, NVIDIA CEO Jensen Huang and Dassault Systèmes CEO Pascal Daloz outlined a long-term plan to fuse high-fidelity virtual twins with accelerated computing and generative biology—positioning physics- and science-grounded “Industry World Models” as a trusted system of record for industrial AI.

Nemotron Document Intelligence: GPU-Powered Agents Turn Documents Into Real-Time BI

Nemotron Labs outlines how open models and GPU-accelerated libraries enable intelligent document processing that transforms static, multimodal files into structured, actionable intelligence. The result: agentic workflows that move beyond OCR and basic RAG to support secure, real-time decisions at enterprise scale.

AxiomProver AI Theorem Prover Breakthrough: Four Open Math Problems Solved

Axiom says its hybrid AI mathematician produced Lean‑verified solutions to four previously unsolved problems, including a novel proof of the Chen–Gendron conjecture. Here’s why a machine‑checked approach could reshape research and high‑stakes industries.

Build With Kimi K2.5 on NVIDIA GPU Endpoints

Kimi K2.5 is a frontier-scale vision-language model (VLM) now available on NVIDIA GPU-accelerated endpoints, enabling fast prototyping, high-throughput serving, and enterprise fine-tuning. Here’s how to get started and what to know about its MoE architecture, vision stack, and deployment paths.

How ChatGPT Health Helps Patients Navigate Care

Patients are increasingly turning to generative AI to make sense of diagnoses, test results, and care options. Here’s how ChatGPT Health fits into patient education and clinic workflows—plus the benefits and limits leaders should weigh.

Latest AI News & Insights for Marketers

Stay ahead of the curve with weekly AI breakthroughs, product launches, and updates that impact how marketing and sales teams work.

What You’ll Find in These AI News Briefs

Essential AI news distilled into clear, actionable insights for growth-focused teams.

Why Our AI News Briefs Matter

- Curated from 100+ verified industry sources
- Focused on marketing and sales impact, not hype
- Summarized weekly in plain English
- Independent and ad-free coverage
