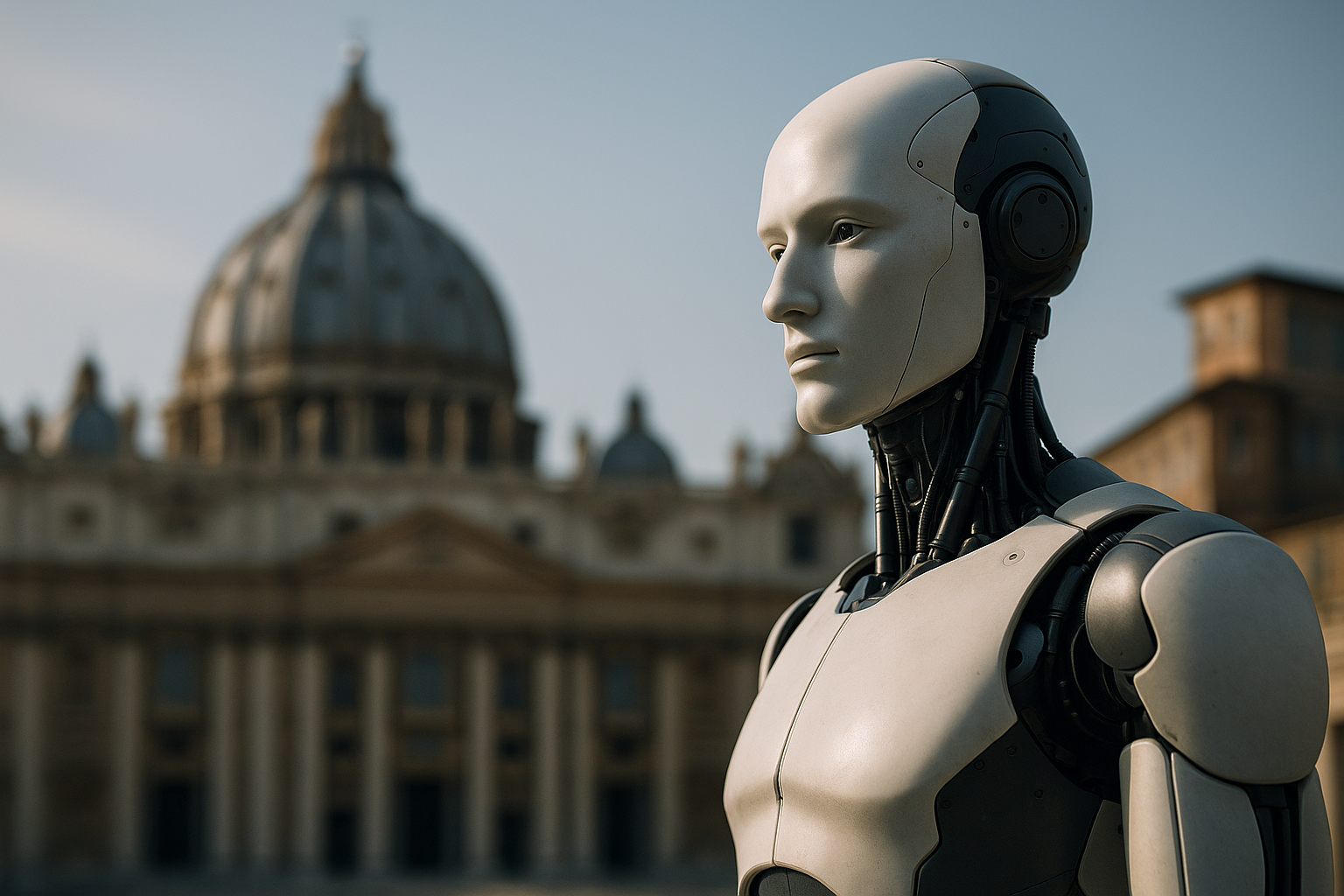

Vatican AI ethics: Rome Call, guidelines, and why businesses should care

Introduction: Why this matters now

AI is reshaping products, jobs, and public trust, and the Vatican brings an unusually clear moral lens to that shift. At the heart of Vatican AI ethics is a conviction that AI can be a gift to humanity while also posing risks to dignity, work, and social justice. That framing is now operational: the Vatican has championed the Rome Call for AI Ethics and issued binding rules for Vatican City State that model how institutions can deploy AI without outsourcing human judgment, a point that matters to business leaders, policymakers, and compliance teams alike [1][2][4][5][6].

Vatican AI ethics in brief

The Rome Call for AI Ethics sets six principles: transparency, inclusion, responsibility, impartiality, reliability, and security. It emerged from Vatican-hosted dialogue and has attracted endorsements from major technology companies, UN agencies, governments, and civil society—and it even spurred interreligious collaboration among Abrahamic faith leaders. The Call presents a human-centered vision that seeks to align AI with the common good rather than purely technical performance metrics [1][2].

The Rome Call for AI Ethics: principles and reach

Pragmatically, the Rome Call urges developers and deployers to ensure explainability and accountability (transparency, responsibility), to mitigate bias and ensure fairness (inclusion, impartiality), and to prioritize dependable systems with robust protections (reliability, security). Its broad uptake signals growing consensus that governance must address not just model accuracy but also social impact and human dignity [1][2].
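
One way a review team might apply the six principles is as a simple evidence checklist. The sketch below is illustrative only: the function name `missing_principles` and the free-text evidence notes are assumptions made for this example, not part of the Rome Call itself.

```python
# The six Rome Call principles as named in this article.
ROME_CALL_PRINCIPLES = (
    "transparency",
    "inclusion",
    "responsibility",
    "impartiality",
    "reliability",
    "security",
)


def missing_principles(evidence: dict[str, str]) -> list[str]:
    """Return the principles that have no non-empty evidence note.

    `evidence` maps a principle name to a short free-text note
    (hypothetical format, e.g. "bias audit completed").
    """
    return [p for p in ROME_CALL_PRINCIPLES if not evidence.get(p, "").strip()]


# Example: a review that documents only two principles so far.
review = {
    "transparency": "model card published",
    "security": "penetration test completed",
    "inclusion": "   ",  # blank notes count as missing
}
print(missing_principles(review))
```

A product or compliance review could refuse to close until this list is empty, which keeps all six principles visible instead of only the ones that are easy to measure.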

Paolo Benanti and ‘algor-ethics’

Franciscan friar and engineer Father Paolo Benanti—advisor to Pope Francis and member of the UN AI advisory body—coined “algor-ethics” to capture the moral stakes of algorithmic decision-making. He argues the core problem of AI is complexity: algorithms reshape how information is filtered, how work is organized, and how relationships are mediated, often intensifying polarization. Benanti presses for a multidisciplinary response rooted in Catholic social teaching; technical fixes alone cannot answer questions about the roles of humans and machines in society [1][2][3].

Vatican City State’s binding AI guidelines

The Vatican City State has implemented enforceable rules for its own offices. The guidelines allow AI to support—but not replace—human judgment, with strict limits in judicial contexts: AI can help organize research, but it cannot interpret the law or determine sentences. Prohibited uses include systems that discriminate, manipulate people subliminally, harm vulnerable individuals, worsen inequality, or jeopardize security or fundamental rights. The rules also set sector-specific expectations for data processing, healthcare, cultural heritage, administration, and security. Notably, the Governorate asserts attribution and economic rights over certain AI-generated content produced within Vatican territory, including controls over content related to the Pope and the Church [4][5][6].

How it stacks up with secular regimes

While distinct in its explicitly faith-informed, anthropocentric framing, the Vatican’s stance overlaps with secular regimes that emphasize human oversight and rights protections. Its approach aims to shape global AI governance while modeling internal practice, converging with values-based regulation but grounding that convergence in dignity, work, and the common good rather than in compliance mechanics alone [5][6]. For a broader policy backdrop, compare the EU AI Act.

Practical implications for organizations

- Embed human-in-the-loop controls for consequential decisions, especially in legal or administrative workflows [4][5][6].

- Conduct non-discrimination and bias audits; avoid any design that nudges or manipulates users subliminally [4][5].

- Map sector-specific risks (e.g., healthcare, cultural heritage, security) and apply tailored safeguards and documentation [4][6].

- Establish transparent attribution practices for AI-generated content, especially when referencing high-profile figures or institutions [4][5][6].

- Define escalation paths for content that could impact dignity, rights, or public order; document red-line use cases and kill-switches [4][5].

- Align external messaging with human-centered principles to reduce reputational risk and regulatory exposure [1][2][5].
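
The first and fifth points above (human-in-the-loop controls and documented red lines) can be sketched as a small policy gate. This is a minimal illustration under stated assumptions: the tag names, the domain list, and the `gate` function are hypothetical and only loosely paraphrase the prohibited uses described earlier; they are not taken from the Vatican guidelines' text.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    BLOCK = "block"


# Hypothetical red-line tags, paraphrasing the prohibited uses in this article.
RED_LINES = frozenset({
    "discriminatory",
    "subliminal_manipulation",
    "harms_vulnerable",
    "worsens_inequality",
    "jeopardizes_rights_or_security",
})

# Hypothetical domains where a human must always make the final call.
HUMAN_REVIEW_DOMAINS = frozenset({"legal", "administrative", "healthcare", "security"})


@dataclass(frozen=True)
class UseCase:
    name: str
    domain: str
    risk_tags: frozenset = frozenset()
    consequential: bool = False


def gate(use_case: UseCase) -> Decision:
    """Return a policy decision for a proposed AI use case."""
    # Red lines win over everything else: no human sign-off can unblock them.
    if use_case.risk_tags & RED_LINES:
        return Decision.BLOCK
    # Consequential or sensitive-domain uses are routed to a human reviewer.
    if use_case.consequential or use_case.domain in HUMAN_REVIEW_DOMAINS:
        return Decision.NEEDS_HUMAN_REVIEW
    return Decision.ALLOW


print(gate(UseCase("organize case-law research", "legal")))
print(gate(UseCase("ad targeting", "marketing", frozenset({"subliminal_manipulation"}))))
print(gate(UseCase("spell check", "editorial")))
```

Checking red lines before the human-review branch is a deliberate choice in this sketch: a prohibited use is blocked outright and never reaches a reviewer who could approve it.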

Legal and IP considerations

The guidelines assert that the Governorate holds the rights to certain AI-generated outputs produced in Vatican territory and keeps tight control over AI content about the Pope and the Church. Legal and IP teams should monitor how such claims may influence cultural institutions and jurisdictions that steward sensitive symbols, archives, or public figures [4][6]. The claim is a notable test case for how attribution and economic rights in generative content may evolve.

Key takeaways for leaders

- Anchor governance in dignity and the common good; don’t rely on technical fixes alone [1][2][3][5].

- Operationalize human oversight where it matters most (courts, benefits, safety-critical domains) [4][5][6].

- Prohibit discriminatory, manipulative, or rights-jeopardizing uses as policy red lines [4][5].

- Document sector-specific controls and model provenance for sensitive content [4][6].

- Treat the Rome Call’s six principles as a practical compass for product and compliance reviews [1][2].

- Anticipate reputational scrutiny when AI mediates information and public discourse; mitigate polarization risks in design and deployment [1][3].

Taken together, the Vatican’s AI ethics framework reads less as a theological add-on than as an actionable governance blueprint: human-centered, values-forward, and designed to travel across contexts [1][2][4][5][6].

Sources

[1] Transcript of Father Paolo Benanti: Finding the heart and soul of AI

https://tools-and-weapons-with-brad-smith.simplecast.com/episodes/father-paolo-benanti/transcript

[2] What does the Vatican know about A.I.? A lot, actually.

https://www.americamagazine.org/politics-society/2025/01/31/vatican-artificial-intelligence-benanti-249821/

[3] Paolo Benanti: “the problem of AI is complexity” – Omnes

https://www.omnesmag.com/en/resources-2/paolo-benanti-problem-ia-complexity/

[4] Vatican City State puts AI guidelines in place | USCCB

https://www.usccb.org/news/2025/vatican-city-state-puts-ai-guidelines-place

[5] Lessons from the Vatican’s AI Guidelines – Word on Fire

https://www.wordonfire.org/articles/lessons-from-the-vaticans-ai-guidelines/

[6] New Vatican AI Guidelines for the development and use of AI models

https://ipkitten.blogspot.com/2025/01/new-vatican-ai-guidelines-for.html