Mobile Fortify facial recognition: Why ICE and CBP’s field tool is under fire

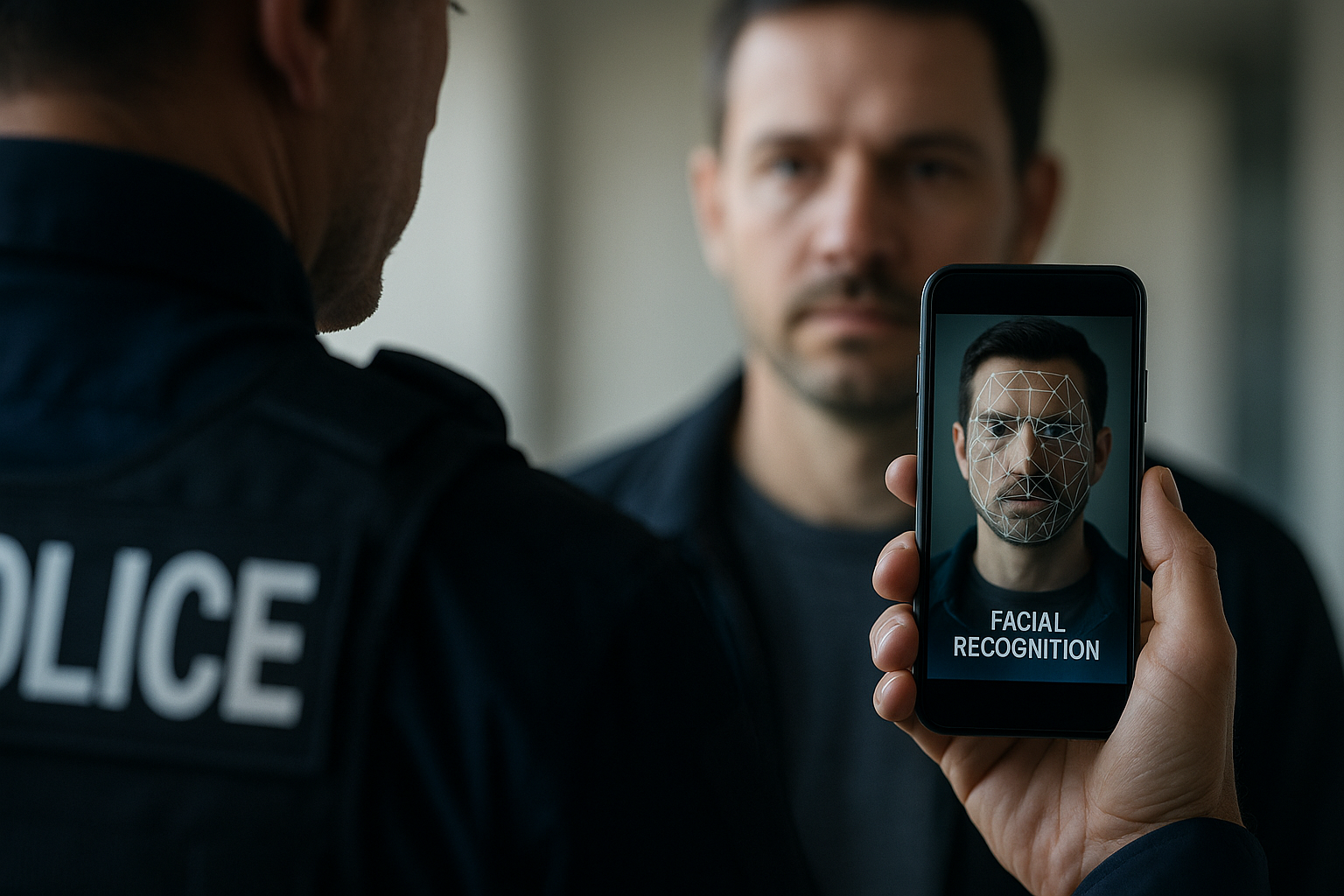

Hero image: A smartphone-based facial scan during a field stop.

U.S. immigration authorities are relying on a mobile biometric system that promises quick answers in the field, but documented misidentifications and contested data sources suggest the tool cannot reliably establish who someone is. Investigations report that ICE and CBP use the Mobile Fortify app to check faces and fingerprints against government databases. Meanwhile, internal agency descriptions frame its outputs as definitive determinations of immigration or citizenship status, and those claims matter when high-stakes enforcement decisions rest on them [1].

What Mobile Fortify facial recognition actually does

Mobile Fortify lets officers point a phone camera at a person's face, take fingerprints, and run both against multiple federal and state databases. Courts have found some of those databases too unreliable to support arrest warrants or probable cause, yet internal DHS and ICE materials describe the app's biometric matches as conclusive indicators of status [1]. The system appears twice in DHS inventories, reflecting its shared use and operation across CBP and ICE [1]. Video reporting has also examined how ICE agents use facial recognition in the field [2].

Documented failures and on-the-ground treatment

In one misidentification documented by 404 Media and summarized by other news outlets, Mobile Fortify assigned two different, incorrect identities to the same detained woman during a raid. Although her identification documents conflicted with those results, agents reportedly treated the app's outputs as authoritative. The case illustrates how a biometric misidentification can escalate into an operational error when a match is used as the sole evidence [1][3]. Observers have also noted instances in which identity documents were disregarded because they contradicted the facial recognition result, which compounds the risk in immigration enforcement [1][3].

Scale and shift to everyday operations

The State of Illinois and the City of Chicago allege in litigation that DHS has used the app more than 100,000 times for face and fingerprint scans. If accurate, that figure indicates Mobile Fortify has moved beyond pilot phases into widespread operational deployment across the United States [1]. At that scale, any systemic error, whether in the face-matching algorithm, fingerprint matching, or the underlying databases, grows with use and multiplies the number of people who could be harmed [1].
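A rough calculation shows why scale matters. Only N, the 100,000-scan figure, comes from the litigation. The per-scan error probability p below is purely hypothetical and chosen for illustration; it is not a measured error rate for Mobile Fortify, and the formula also assumes, for simplicity, that errors occur independently across scans.

$$
\mathbb{E}[\text{wrong matches}] = N \cdot p = 100{,}000 \times 0.001 = 100
$$

Under those assumptions, even one wrong match per thousand scans would mean roughly 100 people misidentified, and the expected count rises in direct proportion to N for as long as p stays fixed.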

Legal, civil liberties, and operational risks

Advocates, experts, and some lawmakers warn that reliance on opaque biometric algorithms and error-prone databases makes the tool unfit to serve as the sole evidence in enforcement actions. Internal DHS materials reported by 404 Media indicate that people subject to scans cannot refuse biometric collection. That raises concerns about coerced capture, limited recourse, and the possibility of wrongful detention or arrest based on flawed or disputed matches [1]. Courts have already questioned the reliability of certain data sources the app draws on, undermining the notion that a match can determine immigration status in a legally defensible way [1][3].

For context on how facial recognition systems are benchmarked, see NIST's Face Recognition Vendor Test (FRVT) program. Although it is separate from DHS operations, its results show that accuracy varies widely across algorithms and capture conditions.

What this means for organizations and policymakers

- Data provenance matters. If a tool queries sources that courts have deemed unreliable, its outputs carry legal and reputational risk when used as primary evidence [1].

- Operational safeguards are essential. Treating a single biometric match as dispositive—especially amid known misidentification cases—invites preventable failures [1][3].

- Governance must travel with the tech. With reported use above 100,000 scans, oversight should scale accordingly, with auditable workflows and accountability for errors [1].


Recommendations and next steps

- Require secondary verification: When biometric results conflict with documents or other records, mandate corroboration before detention or escalation [1][3].

- Limit reliance on contested sources: If a database has been found unreliable for probable cause, do not treat matches as definitive; document alternative pathways for verification [1].

- Establish refusal, review, and appeal protocols: Create clear procedures for contesting results, independent review, and remedy when misidentifications occur [1].

- Audit outcomes at scale: Track false positives, resolution times, and impacts across deployments to identify where and why errors occur, then adjust policy and tooling [1].

- Engage legal counsel early: Align procurement, deployment, and evidentiary use with evolving case law and with litigation over biometric misidentification in ICE enforcement [1][3].

Conclusion and further reading

Mobile Fortify has been positioned inside DHS as a decisive identity check, but reporting and litigation paint a different picture: error-prone databases, documented misidentifications, and no option to refuse scans, all now operating at nationwide scale [1][3]. As agencies and observers weigh next steps, the baseline remains unchanged: a single match does not equal certainty.

Sources

[1] DHS AI Surveillance Arsenal Grows as Agency Defies Courts. Tech Policy Press. https://techpolicy.press/dhs-ai-surveillance-arsenal-grows-as-agency-defies-courts

[2] How ICE agents are using facial recognition to bring … YouTube. https://www.youtube.com/watch?v=kX9LEFVr9GY

[3] How ICE is using facial recognition in Minnesota. The Guardian. https://www.theguardian.com/technology/2026/jan/27/ice-facial-recognition-minnesota